Rethinking the Feed: Giving Users a Say in Their Algorithms

For a long time now, the algorithms that power our social media feeds have operated like a black box. Platforms decide what we see, read, and watch behind closed doors. While this has been incredibly effective at keeping us scrolling, we're starting to see a shift in how people feel about it.

Increasingly, users are experiencing algorithmic burnout. The old model of simply being fed content is losing its charm; people want to have a hand in the recipe.

Here is a look at why opening up the algorithmic engine might be a good idea and, in practical terms, how platforms could pull it off.

Part I: The Case for Algorithmic Transparency

Moving from a "trust me" model to a "show me" model isn't just about giving users a gimmick; it’s about addressing some very real frustrations with how we consume content today.

* **Getting Unstuck from Loops:** Right now, if you engage with a heavy news event, the algorithm often assumes this is your permanent new interest. If we exposed the parameters, users could manually reset this. Instead of the algorithm assuming you're obsessed, you could just tell it, "I'm looking to focus today, turn the tension down."

* **Slowing Down the Burnout:** Most algorithms optimize for short-term engagement, prioritizing intense or reactionary content. Giving users control lets the feed be tuned for long-term satisfaction instead. If someone notices they are in an echo chamber, they could turn up a discovery setting to naturally introduce new topics.

* **Rebuilding Trust:** When people feel guided by an invisible hand, they get cynical. Giving users a steering wheel restores a sense of agency, making them feel less like a metric being tracked and more like a customer being served.

Part II: Naming the Controls (Without Overwhelming the User)

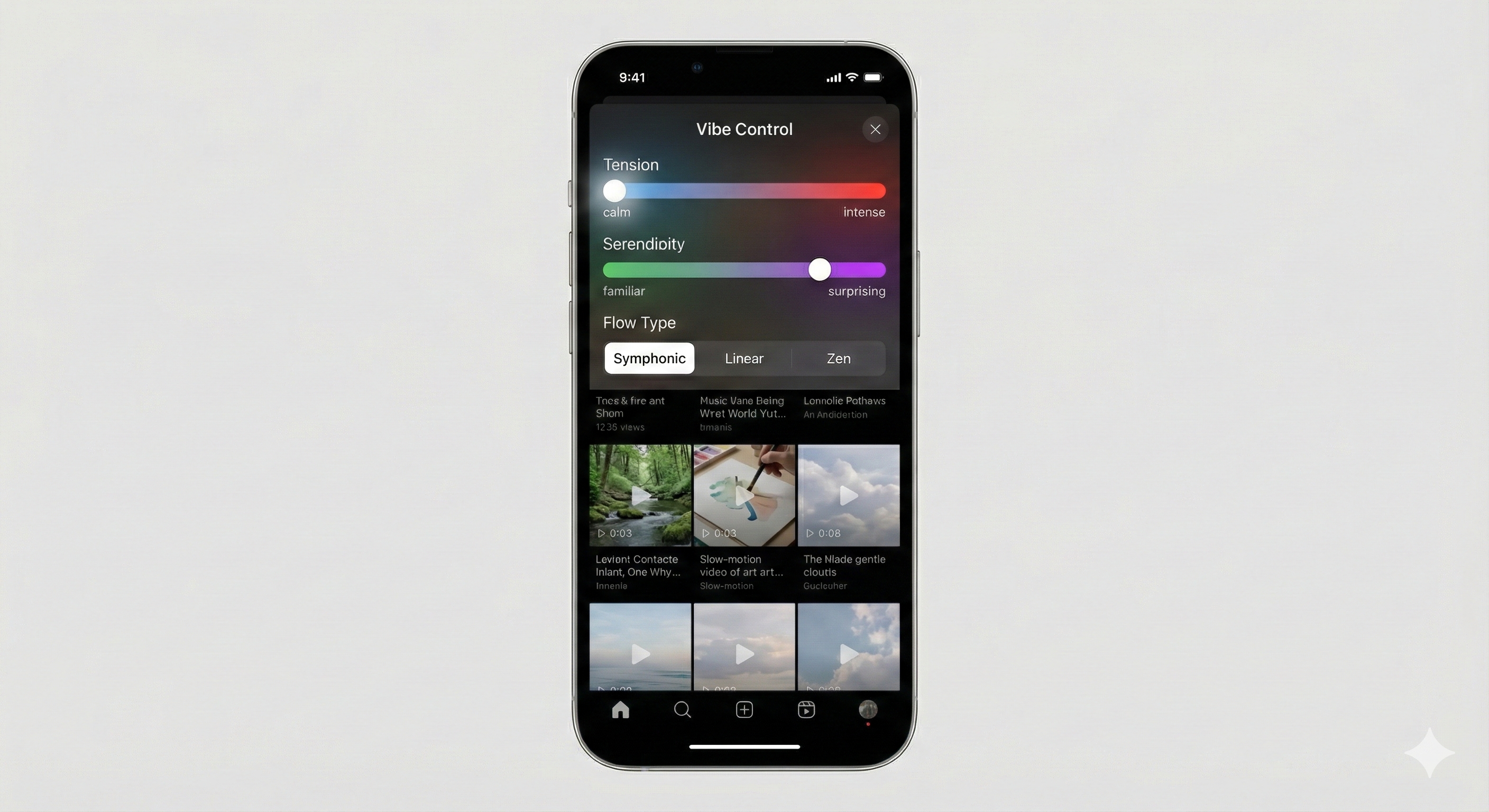

If we actually gave users control, the biggest challenge would be keeping the interface simple. We don't want feeds to look like an airplane cockpit. Here are some user-friendly ways to package complex machine-learning weights into simple sliders:

1. "The Rhythm Dial" (Pacing & Flow)

Content doesn't always have to be a flat line of high intensity. This dial would control the overarching "beat" of the feed:

* **The Standard Feed:** Ranked purely from most to least engaging.

* **The Symphony:** A rhythmic flow that mixes high-energy posts with calmer "palate cleansers."

* **The Deep Dive:** Dedicates the majority of the feed to a single, complex topic.

* **The Wash:** A temporary mode that clears out tension and only shows calming or educational posts.
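One way to picture "The Symphony" is as an interleaving pass over a list that has already been ranked. The sketch below is only an illustration: it assumes each post carries a hypothetical `tension` score from 1 to 10, and the function name, the `calm_threshold` cutoff, and the `every` parameter are all made up for this example.

```python
from typing import Dict, List


def symphony_order(ranked: List[Dict], calm_threshold: float = 4.0,
                   every: int = 2) -> List[Dict]:
    """Insert one calm post after every `every` high-energy posts.

    `ranked` is assumed to be sorted from most to least engaging already.
    """
    intense = [p for p in ranked if p["tension"] > calm_threshold]
    calm = [p for p in ranked if p["tension"] <= calm_threshold]

    feed: List[Dict] = []
    calm_iter = iter(calm)
    for i, post in enumerate(intense, start=1):
        feed.append(post)
        if i % every == 0:
            breather = next(calm_iter, None)
            if breather is not None:
                feed.append(breather)
    # Any calm posts not used as breathers go at the end.
    feed.extend(calm_iter)
    return feed
```

With `every=2`, the feed settles into a two-loud, one-quiet beat for as long as calm posts are available.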

2. "The Horizon Slider" (Discovery vs. Familiarity)

This slider controls how much unknown content you see. Turned all the way down, you only see what you already follow and like (High Familiarity). Turned all the way up, the feed becomes an exploratory tool, pulling in topics and creators completely outside your normal bubble (High Discovery).
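As a rough sketch, the slider could simply decide what share of the feed comes from each pool. The example assumes the platform already has two ranked lists, one of familiar posts and one of unfamiliar ones; `horizon_mix` and its parameters are hypothetical names, and a real feed would presumably interleave the two pools rather than concatenate them.

```python
from typing import List


def horizon_mix(familiar: List[str], discovery: List[str],
                slider: float, size: int) -> List[str]:
    """Build a feed of `size` posts; `slider` (0.0 to 1.0) is the discovery share."""
    if not 0.0 <= slider <= 1.0:
        raise ValueError("slider must be between 0.0 and 1.0")
    n_discovery = min(round(size * slider), len(discovery))
    n_familiar = min(size - n_discovery, len(familiar))
    # Familiar posts first, then discovery posts (ordering kept simple on purpose).
    return familiar[:n_familiar] + discovery[:n_discovery]
```

At `slider=0.0` the feed is all familiar content; at `slider=1.0` it is all discovery.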

3. "The Intensity Capper" (Tension Filtering)

Imagine every piece of content has a baseline intensity score from 1 to 10. Users could set a daily cap. If you set your cap at 5, heated debates and stressful news are filtered out for the day, replaced by moderate-intensity content like hobbies or travel videos.
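Read literally, the cap is a hard filter. A minimal sketch, assuming each post already carries an `intensity` score on the 1 to 10 scale described above (the `Post` type and function name are invented for illustration):

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    title: str
    intensity: float  # assumed baseline score from 1 to 10


def apply_intensity_cap(posts: List[Post], cap: float) -> List[Post]:
    """Keep only posts at or below the user's cap, preserving their order."""
    return [p for p in posts if p.intensity <= cap]
```

Because the order is preserved, lower-intensity posts that were further down the ranking naturally move up to fill the gaps.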

4. "The Balance Mixer" (Topic Satiation)

This tool tracks how much of a certain subject you've consumed over the course of a day. For example, you could set your Mixer to limit political news to 15% of your feed. Once you hit that cap, the algorithm simply stops showing it for the rest of the day and swaps in other interests.
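One possible reading of the Mixer is a per-topic quota applied while the feed is assembled. The sketch below assumes a fixed feed size and a ranked list of posts tagged with a `topic`; `apply_topic_caps` and the shape of `caps` are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List


def apply_topic_caps(ranked: List[Dict], caps: Dict[str, float],
                     feed_size: int) -> List[Dict]:
    """Fill a feed from `ranked`, skipping posts whose topic has hit its cap.

    `caps` maps a topic to its maximum share of the feed (0.15 means 15%).
    """
    counts: Counter = Counter()
    feed: List[Dict] = []
    for post in ranked:
        if len(feed) == feed_size:
            break
        topic = post["topic"]
        if topic in caps:
            # Small epsilon guards against float error in share * size.
            max_count = math.floor(caps[topic] * feed_size + 1e-9)
            if counts[topic] >= max_count:
                continue  # quota reached: the next-ranked post takes the slot
        feed.append(post)
        counts[topic] += 1
    return feed
```

Skipped posts are not replaced explicitly; the loop just keeps walking down the ranking, which is what "swaps in other interests" amounts to here.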

Part III: Under the Hood — How to Build It

Making this work requires fundamentally changing how a platform's recommendation pipeline operates. Modern feeds typically use a two-stage process: **Candidate Generation** (finding 1,000 potentially relevant posts out of billions) and **Ranking** (scoring and ordering those 1,000 posts for your screen).
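To make the two stages concrete, here is a toy version in which candidate generation uses a cheap dot-product similarity and ranking uses whatever scoring function is passed in. Everything in it is an assumption for illustration: the `vec` and `quality` fields, the function names, and the use of a dot product as the retrieval signal.

```python
import heapq
from typing import Callable, Dict, List, Sequence


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(u, v))


def generate_candidates(corpus: List[Dict], user_vec: Sequence[float],
                        k: int) -> List[Dict]:
    """Stage 1: cheaply pick the k posts most similar to the user."""
    return heapq.nlargest(k, corpus, key=lambda post: dot(user_vec, post["vec"]))


def rank(candidates: List[Dict], score_fn: Callable[[Dict], float]) -> List[Dict]:
    """Stage 2: order the shortlist with a more expensive scoring function."""
    return sorted(candidates, key=score_fn, reverse=True)
```

User controls would most naturally plug into stage 2, since that is where the final ordering is decided.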

Here is how engineers would integrate user controls into that pipeline:

Step 1: Multimodal Embedding (Tagging the "Vibe")

To filter by "intensity" or "tension," the system needs to understand more than just hashtags. Platforms would use multimodal AI models to scan the video, audio, and text of a post and convert it into a dense vector (a list of numbers representing the content's meaning).

Instead of just tagging a video as "Politics," the AI assigns metadata for emotional resonance: `[Topic: Politics, Sentiment: -0.8, Tension: 8.5]`. This happens the moment a video is uploaded.
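The metadata record itself could be as small as the sketch below. The model that produces the values is out of scope here; the class name and the value ranges (sentiment from -1 to 1, tension from 1 to 10) are assumptions chosen to match the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VibeTags:
    topic: str
    sentiment: float  # assumed range: -1.0 (negative) to 1.0 (positive)
    tension: float    # assumed range: 1.0 (calm) to 10.0 (heated)

    def __post_init__(self) -> None:
        if not -1.0 <= self.sentiment <= 1.0:
            raise ValueError("sentiment must be between -1.0 and 1.0")
        if not 1.0 <= self.tension <= 10.0:
            raise ValueError("tension must be between 1.0 and 10.0")


# The example from the text, stored once at upload time.
tags = VibeTags(topic="Politics", sentiment=-0.8, tension=8.5)
```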

Step 2: Multi-Objective Optimization (MOO)

Currently, a ranking algorithm's primary goal is maximizing a single objective, usually $P(\text{engagement})$ (the probability that a user will click, watch, or comment).

To give users control, engineers would implement a Multi-Objective Ranker. Instead of a single score, the final ranking is a mathematical balancing act. The scoring function would look something like this:

$$\text{Score} = \alpha \cdot P(\text{engagement}) + \beta \cdot \text{Discovery} - \gamma \cdot \text{Tension}$$

Here, the variables $\alpha$, $\beta$, and $\gamma$ are not hardcoded by the platform; **they are the literal representations of the user's sliders.** If a user turns down "The Intensity Capper," the system increases the weight of $\gamma$, heavily penalizing high-tension posts so they drop to the bottom of the queue.
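In code, a slider-weighted score might look like the sketch below. It assumes three per-post signals, `p_engage` and `novelty` on a 0 to 1 scale and `tension` on a 1 to 10 scale; the field names, the `SliderWeights` type, and the division by 10 to normalize tension are all illustrative choices rather than a known production formula.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SliderWeights:
    engagement: float = 1.0       # weight on predicted engagement
    discovery: float = 0.0        # weight on novelty
    tension_penalty: float = 0.0  # rises as the user lowers the intensity cap


def score(post: Dict, w: SliderWeights) -> float:
    return (w.engagement * post["p_engage"]
            + w.discovery * post["novelty"]
            - w.tension_penalty * post["tension"] / 10.0)


def rank_with_sliders(posts: List[Dict], w: SliderWeights) -> List[Dict]:
    return sorted(posts, key=lambda p: score(p, w), reverse=True)
```

With the default weights this reduces to a plain engagement ranking; raising `tension_penalty` is what pushes heated posts down the list.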

Step 3: Client-Side Re-ranking (Instant Feedback)

If a user moves a slider, they shouldn't have to refresh the page to see the results. To make the UI feel responsive, platforms could send "batches" of diverse content to the user's phone in advance. When the user adjusts "The Horizon Slider," the app simply re-sorts the batch locally on the device, providing an instant, seamless shift in the feed's vibe.
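The local re-sort is cheap because the signals arrive with the batch. The sketch below is written in Python for consistency with the other examples, although a real client would use its platform's own language; `PrefetchedBatch` and the `familiarity` and `novelty` fields are hypothetical.

```python
from typing import Dict, List


class PrefetchedBatch:
    """A batch of posts already on the device, with scores precomputed server-side."""

    def __init__(self, posts: List[Dict]) -> None:
        self._posts = list(posts)

    def reorder(self, horizon: float) -> List[Dict]:
        """Re-sort locally: horizon=0.0 favors familiarity, 1.0 favors novelty."""
        if not 0.0 <= horizon <= 1.0:
            raise ValueError("horizon must be between 0.0 and 1.0")
        return sorted(
            self._posts,
            key=lambda p: (1.0 - horizon) * p["familiarity"] + horizon * p["novelty"],
            reverse=True,
        )
```

No network call is involved in `reorder`, which is what would let the feed respond while the slider is still being dragged.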

Summary

The era of the "Black Box" feed might be reaching its natural limit. Users today are a bit more exhausted and a lot more tech-savvy than they were a decade ago. They don't necessarily want an algorithm that claims to know them better than they know themselves; they just want an algorithm that listens.

By exposing a few simple parameters, labeling them intuitively, and rebuilding the ranking pipeline to accept user inputs, we could make social media a bit less anxious and a bit more intentional.