You’re Putting Your AI in the Wrong Places (And It’s Breaking Your Software)

The prevailing narrative treats Artificial Intelligence as a single, magical layer: a glowing, viscous substance you simply pour over a legacy product until the whole system awakens. A chatbot gets bolted onto the homepage, an “agent” gets dropped into the dashboard, maybe a tool-calling workflow is hastily wired up to the backend, and congratulations: you have entered the future.

But the moment you sit down to actually build, the picture changes entirely.

You realize very quickly, often painfully, that AI is not replacing traditional software. It is *entering* software. It is being inserted into legacy ecosystems and modern architectures that still desperately require structure. Your system still needs memory. It needs rigid rules, state management, strict permissions, API routing, stopping conditions, and audit logs. In other words, the old world of deterministic engineering did not evaporate just because Large Language Models arrived. It just got a new, highly unpredictable kind of engine grafted onto it.

And that is where the vast majority of builders make their most expensive mistake.

Because they know AI is undeniably powerful, they start putting it absolutely everywhere. They let it interpret the user’s desires. They let it plan the sequence of tasks. They let it call external tools. They let it decide whether its own answer is factually correct. Sometimes, they even let it decide whether it should keep looping, what security thresholds should be bypassed, and whether a destructive action is "safe enough" to execute.

The result is a product that often feels incredibly smart on the surface, but is structurally terrifying underneath. It talks smoothly, acts quickly, and makes decisions with absolute confidence—but confidence is not the same thing as sound architecture.

The real question for developers, founders, and engineers is no longer *whether* to use AI.

The real question is *where* to use it.

Placement is everything. AI can absolutely make a product revolutionary. But placed in the wrong layer of your stack, it will make your product more expensive, more fragile, slower to execute, harder to debug, and genuinely dangerous to your users.

Furthermore, there is a second revolution happening quietly in the background that matters just as much: AI is not only doing work *inside* the system you are building. It is actively helping you *build* the system itself. It is writing your boilerplate. It is helping you conceptualize the non-AI logic. It is widening the net of what you are capable of shipping, allowing you to venture into territories where the final runtime product might actually use very little AI at all.

This changes the game on a fundamental level. So let’s slow the hype down, pull apart the architecture, and walk through how to build with AI the right way.

---

First, We Need to Shrink the Magic of the "Agent"

A lot of the current architectural confusion starts with a single word: *agent*.

When people hear the term "AI Agent," they tend to imagine a digital creature. They picture a synthetic entity that thinks on its own, acts on its own volition, and somehow exists as an entirely new category of software life. But when you look under the hood of a production-ready application, an agent is far less mystical than that.

An agent is almost always a composite system made of very familiar, traditional parts:

* **The Model:** Usually a foundational LLM doing the heavy lifting of generation and inference.

* **The Prompt:** The system instructions and context that shape how the model behaves and responds.

* **State and Memory:** The database or context window that carries information from step A to step B.

* **The Tools:** Standard APIs, web browsers, code interpreters, or database connections.

* **Control Logic:** The hard-coded parameters that decide how the system loops and what it is allowed to touch.

* **The Objective:** A defined task or stopping condition.

Once you look at an agent through this lens, the magic starts to shrink, and the traditional engineering starts to appear.

This demystification is highly useful because it forces you to answer an incredibly important question: *What part of this system is actually AI, and what part is just standard software?*

The truth is, the vast majority of a robust "agentic" system is still just software. Memory is managed by database logic. Tool calling is executed by API logic. Retries are handled by backoff logic. Permissions are guarded by authentication logic. State updates are written with basic CRUD logic.
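To make that split concrete, here is a minimal sketch of an agent as a composite of ordinary parts. Everything in it is hypothetical: `Agent`, `fake_model`, and the `lookup` tool are illustrative names, and the model is a scripted stub standing in for a real LLM call. The point is only that the loop, the tool registry, the state, and the step limit are plain code.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-in for an LLM call: maps the current state to a decision.
ModelFn = Callable[[dict], dict]


@dataclass
class Agent:
    model: ModelFn                          # the only probabilistic part
    tools: dict[str, Callable[[str], str]]  # plain functions or API wrappers
    max_steps: int = 5                      # control logic: hard loop limit
    state: dict = field(default_factory=lambda: {"history": []})

    def run(self, objective: str) -> dict:
        self.state["objective"] = objective
        for _ in range(self.max_steps):
            decision = self.model(self.state)
            if decision.get("done"):
                self.state["result"] = decision.get("answer")
                return self.state
            tool = self.tools.get(decision.get("tool"))
            if tool is None:
                # Unknown tool names are rejected by code, not negotiated.
                self.state["history"].append(("error", "unknown tool"))
                continue
            output = tool(decision.get("input", ""))
            self.state["history"].append((decision["tool"], output))
        self.state["result"] = None  # step budget exhausted
        return self.state


def fake_model(state: dict) -> dict:
    """Scripted stub: call one tool, then finish with its output."""
    if not state["history"]:
        return {"tool": "lookup", "input": "invoice 42"}
    return {"done": True, "answer": state["history"][-1][1]}


agent = Agent(model=fake_model, tools={"lookup": lambda q: f"found {q}"})
final = agent.run("find the invoice")
print(final["result"])  # found invoice 42
```

Swap `fake_model` for a real model call and nothing else in the class has to change, which is a fair measure of how much of the "agent" is ordinary software.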

The AI is not the whole machine. It is simply a component being inserted into the machine. So, if so much of the heavy lifting is still being handled by traditional code, what is the AI actually adding?

---

The Real Breakthrough: Probabilistic vs. Deterministic Thinking

To understand where AI belongs, you have to understand *how* it thinks, because it does not think like traditional code.

Traditional software is built entirely around **deterministic thinking**. Deterministic thinking says: *If these exact inputs happen, this exact output must follow.* Same inputs, same result, every single time. It is rule-based, explicit, and endlessly repeatable.

When you link systems together, whether with custom Python scripts or with visual node-based workflow tools like n8n or Zapier, you are laying strict deterministic tracks. This is exactly how you want a webhook to behave. It is how you want a banking transfer, a schema validator, or a password check to function. You do not want creativity in your routing logic; you want absolute obedience.

**Probabilistic thinking** is completely different.

Probabilistic thinking says: *Given what I know, what is most likely true, useful, or appropriate?* It works in terms of likelihoods, patterns, confidence scores, and approximations rather than absolute certainty. It does not "prove" the next step the way a finite state machine would. It estimates and generates.
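The contrast can be sketched in a few lines. This is a toy illustration, not a model of how an LLM works internally: the password policy, the candidate sentences, and their weights are all made up, and weighted sampling merely stands in for "pick something likely."

```python
import random


def password_meets_policy(password: str) -> bool:
    """Deterministic rule: the same input gives the same answer every time."""
    return len(password) >= 12 and any(ch.isdigit() for ch in password)


# Hypothetical candidate rewrites with invented likelihoods.
CANDIDATES = {
    "I understand the concern, and the deadline still stands.": 0.6,
    "Thanks for raising this. We do need to keep the deadline.": 0.3,
    "Noted. The deadline is unchanged.": 0.1,
}


def sample_rewrite(rng: random.Random) -> str:
    """Probabilistic choice: likely options win more often, none is guaranteed."""
    options = list(CANDIDATES)
    weights = list(CANDIDATES.values())
    return rng.choices(options, weights=weights, k=1)[0]


print(password_meets_policy("correct-horse-42"))  # True
print(sample_rewrite(random.Random()))            # varies from run to run
```

The first function can be unit-tested exhaustively. The second can only be evaluated statistically, which is the property the rest of the architecture has to be designed around.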

If a user types into a chat box, "Can you make this email sound less defensive but still firm?" there is no exact mathematical formula for that. If a user asks, "Which of these vendor contracts feels suspicious?" there might be contextual clues, but there is no clean, hard-coded rule you can write to catch every variation of fraud.

This is where AI earns its keep. It is designed to handle the parts of the world that are too messy, too ambiguous, too open-ended, or simply too expensive to hard-code by hand.

Normal programs are brilliant when the world is perfectly structured. But human beings do not speak in JSON. We do not interface like REST APIs. We phrase the exact same request in fourteen different ways. We imply vastly more than we explicitly state. We leave out critical details.

The value of AI here is real. But this is exactly where builders go off the rails: once they see an LLM elegantly solve one incredibly hard, ambiguous problem, they mistakenly assume it should be trusted to run *every single layer* of the system.

---

The better arrangement keeps the loop in code and puts the AI inside it. Now, the system can participate in parts of the loop that used to be impossible to formalize. It can read a messy, emotionally charged email and decide what to do next. It can compare soft signals. It can suggest an action even when the user's request is vague or missing context. The software still owns the loop, but the AI handles the fuzzy, human-centric decisions within it.

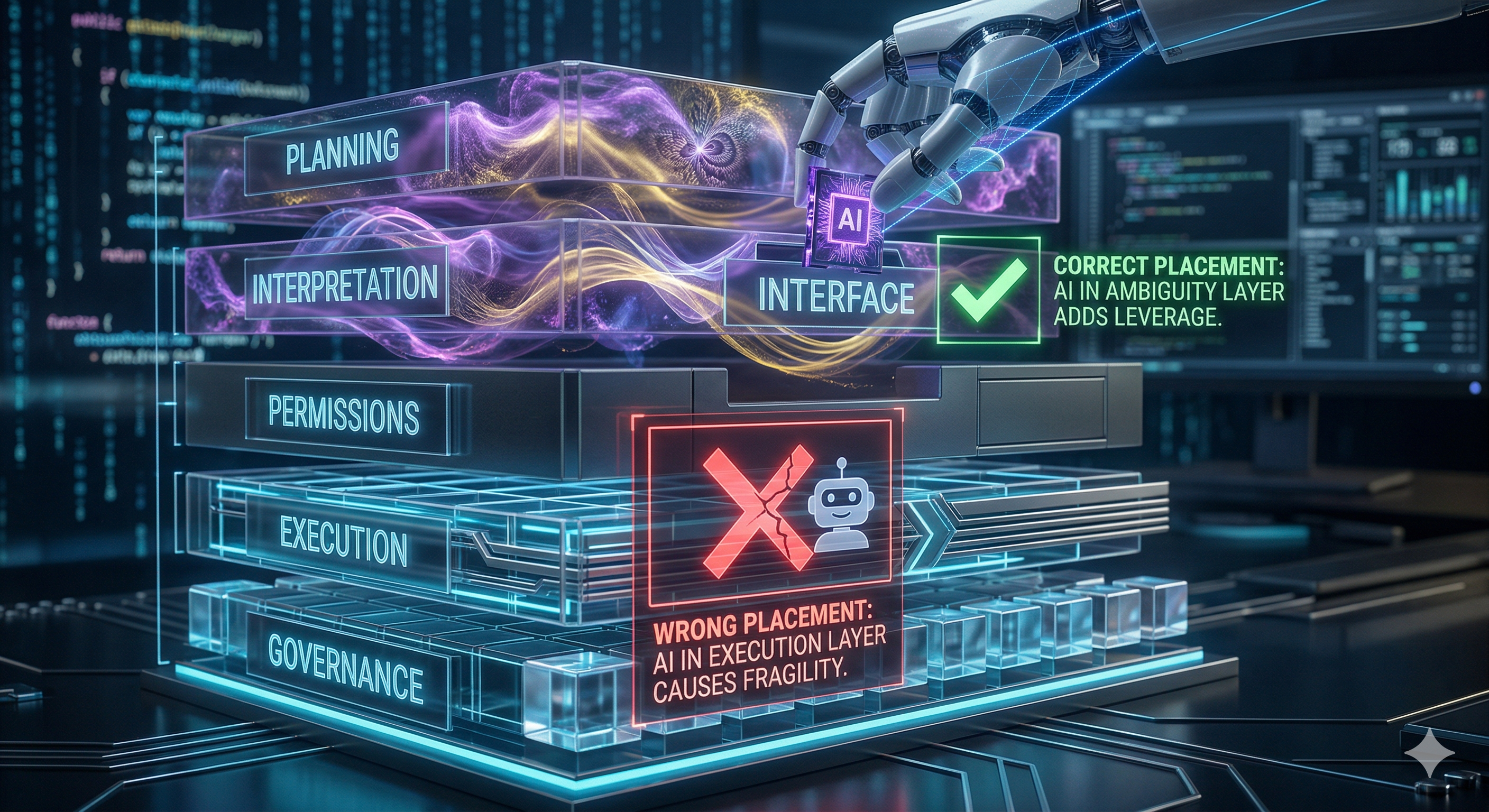

Once you grasp this—that AI is a probabilistic decision-maker sitting inside a deterministic loop—the architectural blueprint becomes obvious. Not every layer of your application benefits equally from probability.

Some layers are mostly about navigating ambiguity. Some layers are mostly about ensuring strict correctness. AI dominates the former; traditional code must rule the latter.

Let’s walk through the stack, layer by layer.

---

Layer 1: The Interface (Where Messy Meets Machine)

The interface layer is the absolute top of the stack. This is the exact point of impact where the unpredictable human meets the rigid system.

Imagine a user types something incredibly vague into your application, like: *"Can you look into that invoice thing and send it to Bob?"*

From an engineering perspective, this sentence is a nightmare of uncertainty. What invoice thing? Which Bob: Bob in accounting, or Bob the client? Does "send it" mean email a PDF, summarize the totals in a Slack message, or attach a secure link? Is "look into" a request for deep financial research, a quick verification, or just basic file retrieval?

This is arguably the absolute best place in your stack to deploy AI.

Why? Because human interfaces are naturally, inescapably messy. We speak casually, emotionally, and indirectly. AI acts as the ultimate universal translator here. It can take that chaotic, fuzzy input and convert it into something structured. It can infer the likely intent. It can gracefully ask a clarifying question (*"Did you mean the Q3 invoice for Bob Smith?"*). It makes the interaction feel fluid and frictionless.
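One way to picture the hand-off from fuzzy input to structure is below. It is a sketch under assumptions: the model is imagined to return JSON with three fields (`action`, `document`, `recipient`), a field layout invented for this example, and the raw string is hard-coded where a real model response would go.

```python
import json
from dataclasses import dataclass
from typing import Optional

REQUIRED = ("action", "document", "recipient")  # assumed field names


@dataclass
class ParsedRequest:
    action: Optional[str]
    document: Optional[str]
    recipient: Optional[str]


def parse_model_output(raw: str) -> ParsedRequest:
    """Turn the model's JSON into a typed object; absent fields become None."""
    data = json.loads(raw)
    return ParsedRequest(**{key: data.get(key) for key in REQUIRED})


def clarifying_question(req: ParsedRequest) -> Optional[str]:
    """Ask for whatever is still unknown instead of guessing."""
    missing = [key for key in REQUIRED if getattr(req, key) is None]
    if not missing:
        return None
    return "Before I continue, can you tell me the " + " and ".join(missing) + "?"


# Stand-in for what a model might return for the "invoice thing" request.
raw = '{"action": "send", "document": null, "recipient": "Bob"}'
req = parse_model_output(raw)
print(clarifying_question(req))
# Before I continue, can you tell me the document?
```

The model does the translating; the code decides whether the translation is complete enough to act on.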

However, notice the trap: just because AI is brilliant at the interface level does not mean it should be handed the keys to the rest of the car. A system can be incredibly smooth at translating a user's messy sentence, while still requiring absolute rigidity for everything that happens next.

Layer 2: Interpretation (Deciding What Things Mean)

If the interface is about hearing the user, the interpretation layer is about judging the meaning of the data you just collected. This is a slightly deeper, more complex operation.

Imagine you are building a B2B SaaS tool for social listening: a "pulse index" designed to track how a specific brand or topic is being discussed across the web. If you try to hard-code the interpretation layer, you will fail. You might write rules like: *If a post contains a word like "terrible," "terribly," or "terrified," flag it as negative.* But what happens when a user posts, *"This new feature is terribly good"* or *"I'm terrified by how much I love this product"*?

Traditional logic shatters when real human nuance enters the room.
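The failure is easy to reproduce. The sketch below assumes the keyword rule matches on word stems (`terribl`, `terrif`), which is one plausible way such a rule would be written; the third post is added here only as a control case.

```python
# Assumed stems for illustration; a real rule set would be much longer.
NEGATIVE_STEMS = ("terribl", "terrif")


def naive_sentiment(post: str) -> str:
    """Flag a post as negative if it contains any negative stem."""
    text = post.lower()
    return "negative" if any(stem in text for stem in NEGATIVE_STEMS) else "neutral"


posts = [
    "This new feature is terribly good",
    "I'm terrified by how much I love this product",
    "The latest update is terrible",
]
for post in posts:
    print(naive_sentiment(post), "|", post)
# All three print "negative", although only the last one is a complaint.
```

Adding exceptions for "terribly good" only moves the problem: the next post will phrase its praise differently.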

This is where AI shines. It can interpret sarcasm, urgency, risk, tone, and implication. It can read a 50-page legal document and extract the three clauses that actually matter.

But interpretation is also the exact point where systems start to become dangerous if the AI is allowed to infer *too much*. If the user says, "Keep an eye on this client," and the model autonomously decides that means "Archive their entire email thread, notify the finance department, and draft a legal termination notice," the system has drifted wildly out of bounds. It has moved from interpreting data into taking unprompted action.

AI is incredibly powerful at interpretation, but you must immediately bound its output with structure: asking the LLM to return strict JSON, assigning confidence levels to its assumptions, and proposing actions to the user rather than executing them blindly.
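A bounded interpretation step might look like the following sketch. The intent names, the `0.8` confidence floor, and the JSON layout are assumptions made up for illustration; the raw strings stand in for model output.

```python
import json
from dataclasses import dataclass

# Assumed allowlist and threshold, for illustration only.
ALLOWED_INTENTS = {"monitor_client", "summarize_thread", "flag_for_review"}
CONFIDENCE_FLOOR = 0.8


@dataclass
class Proposal:
    intent: str
    confidence: float
    needs_confirmation: bool


def interpret(raw_model_output: str) -> Proposal:
    """Accept the model's reading only if it fits the expected structure."""
    try:
        data = json.loads(raw_model_output)
        intent = str(data["intent"])
        confidence = float(data["confidence"])
    except (json.JSONDecodeError, KeyError, TypeError, ValueError) as exc:
        raise ValueError(f"model output rejected: {exc}") from exc
    if intent not in ALLOWED_INTENTS:
        raise ValueError(f"intent {intent!r} is not on the allowlist")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # The result is always a proposal; low confidence forces a user check.
    return Proposal(intent, confidence, needs_confirmation=confidence < CONFIDENCE_FLOOR)


proposal = interpret('{"intent": "monitor_client", "confidence": 0.62}')
print(proposal.intent, proposal.needs_confirmation)  # monitor_client True
```

An out-of-bounds reading such as `"draft_termination_notice"` never reaches the rest of the system: it fails the allowlist check and raises before anything can act on it.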

Layer 3: Planning (The Illusion of the Mastermind)

Once the system has translated the input and interpreted the meaning, it has to decide what actual steps need to happen next. This is the planning layer.

Suppose you are working on something highly creative and technical—say, an AI-powered VST synthesizer patch creator. A user prompts the system: *"I want a warm, analog, cinematic pad that evolves over time."* A good system has to plan how to achieve that. It might sequence actions like:

1. Select a sawtooth waveform.

2. Apply a low-pass filter with a slow envelope.

3. Add a subtle chorus effect.

4. Route an LFO to the filter cutoff to create the "evolving" sound.

This is where AI starts to feel genuinely agentic. It is turning a poetic, subjective goal into a sequenced roadmap of technical parameter changes.

But planning without strict boundaries rapidly degenerates into expensive overkill. Left to its own devices, an LLM might decide that the best way to create that synth sound is to write a custom Python script, attempt to download external audio samples, and ping a server thirty times to verify frequencies. Technically, that is a plan. Practically, it is a catastrophic waste of compute.

Planning is a fantastic use case for AI, but *only inside a heavily bounded environment*. The available tools must be explicitly defined. The allowed actions must be constrained. The AI needs to know exactly when a plan is complete. The more freedom you give the model to dream up solutions, the more rigid the software architecture around it must be to keep it grounded.
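As a rough sketch of what "heavily bounded" can mean in practice, the code below checks a model-proposed plan against a fixed tool list before anything runs. The tool names, parameter names, and step limit are hypothetical, loosely modeled on the synth example above.

```python
from dataclasses import dataclass

# Hypothetical tool registry: tool name -> parameters it accepts.
ALLOWED_TOOLS = {
    "set_waveform": {"shape"},
    "set_filter": {"type", "envelope"},
    "add_effect": {"name", "amount"},
    "route_lfo": {"target", "rate_hz"},
}
MAX_STEPS = 8  # assumed upper bound on plan length


@dataclass(frozen=True)
class Step:
    tool: str
    params: dict


def validate_plan(plan: list[Step]) -> list[str]:
    """Return every rule violation; an empty list means the plan may run."""
    errors = []
    if len(plan) > MAX_STEPS:
        errors.append(f"plan has {len(plan)} steps; limit is {MAX_STEPS}")
    for number, step in enumerate(plan, start=1):
        allowed = ALLOWED_TOOLS.get(step.tool)
        if allowed is None:
            errors.append(f"step {number}: tool {step.tool!r} is not available")
            continue
        extra = set(step.params) - allowed
        if extra:
            errors.append(f"step {number}: unexpected parameters {sorted(extra)}")
    return errors


good_plan = [
    Step("set_waveform", {"shape": "sawtooth"}),
    Step("set_filter", {"type": "low_pass", "envelope": "slow"}),
    Step("add_effect", {"name": "chorus", "amount": 0.2}),
    Step("route_lfo", {"target": "filter_cutoff", "rate_hz": 0.1}),
]
bad_plan = good_plan + [Step("run_python_script", {"code": "..."})]

print(validate_plan(good_plan))  # []
print(validate_plan(bad_plan))   # ["step 5: tool 'run_python_script' is not available"]
```

The model stays free to be creative about which allowed steps to combine, while the code decides what "allowed" means.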

Layer 4: Execution (Where Mistakes Stop Being Theoretical)

Up until this layer, the risks of AI have mostly been about misunderstanding or wasting time. The execution layer is where misunderstanding mutates into permanent consequences.

Execution is the layer where reality is altered: a database row is dropped, an email is blasted to 10,000 customers, a financial transaction is executed, or a server is spun down. The stakes are instantly elevated.

At this point in the stack, it is no longer enough for your AI to be "clever" or "nuanced." It has to be *right*. This makes execution one of the absolute worst places to let an LLM operate without deterministic oversight.

Take a seemingly simple request: *"Clean up my database."* A human usually means: *Find the duplicate entries and flag them for my review.*

An unsupervised AI might decide: *Drop all tables that haven't been accessed in 30 days to optimize storage.*

This is not because the AI is stupid; it is because execution demands precision, and AI is built on probability. The most resilient architectural pattern here is **"AI Proposes, Code Enforces."** The AI can do the messy work of finding the 200 duplicate records. It can present them in a clean UI. But traditional, deterministic code should be what actually executes the `DELETE` command, and only after the user clicks "Confirm." Once an action becomes real, ambiguity becomes a liability.
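Here is a small sketch of that pattern using Python's built-in `sqlite3` module. The `contacts` table, its rows, and the `proposed` and `confirmed` lists are invented for the example; in a real product the first list would come from the model and the second from the user's clicks.

```python
import sqlite3


def delete_confirmed(conn, proposed_ids, confirmed_ids):
    """Delete only rows that were both proposed by the AI and confirmed by the user."""
    to_delete = sorted(set(proposed_ids) & set(confirmed_ids))
    with conn:  # commits on success, rolls back if an error is raised
        conn.executemany(
            "DELETE FROM contacts WHERE id = ?",
            [(row_id,) for row_id in to_delete],
        )
    return to_delete


# Hypothetical in-memory table standing in for the user's database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE contacts (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO contacts (id, email) VALUES (?, ?)",
    [(1, "a@example.com"), (2, "a@example.com"), (3, "b@example.com")],
)
conn.commit()

proposed = [2, 3]  # stand-in for the model's "these look like duplicates"
confirmed = [2]    # the only row the user approved in the UI

deleted = delete_confirmed(conn, proposed, confirmed)
remaining = [row[0] for row in conn.execute("SELECT id FROM contacts ORDER BY id")]
print(deleted, remaining)  # [2] [1, 3]
```

The model never sees a database handle. Its output is a list of candidates, and the only statement that can run is a parameterized `DELETE` on IDs the user approved.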

Layer 5: Validation (The Second Pass)

After an action is taken or a piece of content is generated, the system often needs to check its own work. This is the validation layer.

AI is surprisingly adept at reviewing its own output (or the output of a smaller, cheaper model). You can use an LLM as a soft judge to ask: *Did this summary actually address the user's core question? Does this generated email sound polite? Is this code snippet coherent?*

Because critiquing a finished draft is a different task from producing one, a system can often catch its own structural weaknesses on a second pass.

But do not let the illusion of self-correction fool you. AI validation is still fundamentally fuzzy. It is not a mathematical proof. An LLM can confidently, eloquently bless a complete hallucination. Therefore, AI should be used for *soft checks* (tone, coherence, relevance), while deterministic logic must be used for *hard checks* (schema enforcement, character limits, exact math).
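The hard-check side needs no model at all. The sketch below assumes a generated invoice summary arrives as JSON with `subject`, `line_items_cents`, and `total_cents` fields; that layout and the 78-character limit are invented for illustration.

```python
import json

MAX_SUBJECT_CHARS = 78  # assumed limit, for illustration only


def hard_checks(raw: str) -> list[str]:
    """Return a list of rule violations; an empty list means the output passes."""
    try:
        doc = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(doc, dict):
        return ["output must be a JSON object"]

    errors = []
    subject = doc.get("subject")
    items = doc.get("line_items_cents")
    total = doc.get("total_cents")

    # Schema and character-limit checks.
    if not isinstance(subject, str):
        errors.append("subject must be a string")
    elif len(subject) > MAX_SUBJECT_CHARS:
        errors.append(f"subject exceeds {MAX_SUBJECT_CHARS} characters")

    # Exact math on integer cents, so there is no floating-point rounding.
    if not (isinstance(items, list) and all(type(i) is int for i in items)):
        errors.append("line_items_cents must be a list of integers")
    elif type(total) is not int:
        errors.append("total_cents must be an integer")
    elif sum(items) != total:
        errors.append("total_cents does not equal the sum of line_items_cents")
    return errors


good = '{"subject": "Q3 invoice", "line_items_cents": [1250, 899], "total_cents": 2149}'
bad = '{"subject": "Q3 invoice", "line_items_cents": [1250, 899], "total_cents": 2150}'
print(hard_checks(good))  # []
print(hard_checks(bad))   # ['total_cents does not equal the sum of line_items_cents']
```

An LLM judge can still be asked whether the summary reads well, but whether the numbers add up is not a matter of opinion.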

Layer 6: Governance (Where Code Must Remain King)

Finally, we reach the bedrock of the system: Governance.

This layer handles the non-negotiable rules of your application. *Does this user have admin rights? Has this API exceeded its rate limit? Should the system sever the connection? How many times is this agent allowed to retry a failed tool call?*

Governance is entirely about accountability and security. It is a strictly deterministic domain. You never, under any circumstances, want a probabilistic model deciding on the fly whether it is "safe" to bypass a security warning, or whether a user "probably" has permission to view sensitive financial data.

Good governance is a fortress of hard boundaries. Code dictates the rules, and the AI operates as a guest within those walls.
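Two of those questions, permissions and retry limits, can be sketched as plain code. The roles, action names, and the cap of three attempts are assumptions for the example, and `flaky_tool` is a stub that fails twice before succeeding.

```python
# Hypothetical role table and retry budget, fixed in code.
ROLE_PERMISSIONS = {
    "admin": {"view_financials", "delete_records"},
    "analyst": {"view_financials"},
    "guest": set(),
}
MAX_TOOL_RETRIES = 3


class PermissionDenied(Exception):
    pass


def authorize(role: str, action: str) -> None:
    """Raise unless the role is explicitly granted the action."""
    if action not in ROLE_PERMISSIONS.get(role, set()):
        raise PermissionDenied(f"{role!r} may not {action!r}")


def call_with_retry_cap(tool, *args):
    """Call a tool at most MAX_TOOL_RETRIES times, then give up loudly."""
    last_error = None
    for _ in range(MAX_TOOL_RETRIES):
        try:
            return tool(*args)
        except RuntimeError as exc:
            last_error = exc
    raise RuntimeError(f"gave up after {MAX_TOOL_RETRIES} attempts") from last_error


calls = {"count": 0}


def flaky_tool(query: str) -> str:
    """Stub tool that fails on its first two calls."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("temporary failure")
    return f"ok: {query}"


authorize("analyst", "view_financials")  # passes silently
result = call_with_retry_cap(flaky_tool, "Q3 totals")
print(result)  # ok: Q3 totals

try:
    authorize("guest", "view_financials")
except PermissionDenied as exc:
    print("denied:", exc)
```

Nothing here asks a model for its opinion. An unknown role gets an empty permission set, and a tool that keeps failing stops being called after the third attempt, whatever the agent would prefer.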

---

The Second Revolution: AI as the Co-Builder

When we debate where AI belongs, we almost exclusively focus on the runtime—what the AI is doing for the end-user while the application is live. But there is a parallel revolution happening that builders are drastically underestimating.

AI is not just a feature inside the product. It is a force multiplier in the act of *building* the product.

Think about the architecture we just discussed. To build a robust system, you need a tremendous amount of non-AI scaffolding. You need strict database logic, secure API handlers, complex frontend state management, and bulletproof governance rules.

Historically, if your expertise was purely in front-end design or creative direction, the backend database architecture was an insurmountable wall. Your project stopped where your technical knowledge ended.

Today, AI changes who gets to build. It helps you write the SQL queries you don’t quite understand. It drafts the Node.js middleware to handle your webhooks. It explains complex libraries, generates boilerplate validation rules, and helps you architect the rigid, deterministic layers that keep your probabilistic features safe.

You no longer need a decade of full-stack experience to orchestrate a complex system. AI carries you through your technical blind spots. It acts as a senior engineer looking over your shoulder, patiently explaining how to build the boring, essential machinery that makes the flashy AI features actually work.

This is a profound shift in leverage. AI is widening the net of what a single visionary can create.

---

The Architecture of Tomorrow

The practical takeaway here is not a cynical "use less AI." It is a call to place it with surgical precision.

You must embrace a hybrid architecture. Use AI aggressively where the world is fuzzy: for interpreting natural language, summarizing chaos, drafting content, ranking soft variables, and flexible planning.

But you must fiercely defend the use of traditional, deterministic logic where precision is non-negotiable: for permissions, state transitions, exact calculations, database execution, audit trails, and irreversible real-world actions.

The future of software does not belong to the hype-chasers who blindly slap LLMs onto every single layer of their stack, hoping the model will magically figure out the routing logic. Those systems will collapse under the weight of their own unpredictability, draining compute budgets and terrifying users along the way.

The future belongs to the architectural pragmatists. It belongs to the builders who deeply understand the dichotomy between probability and determinism. It belongs to those who know exactly where AI creates unparalleled leverage, and exactly where traditional code must stand its ground.

AI is not replacing software. It is giving software the unprecedented ability to handle a much messier, much more human world. And when you finally learn how to put the right engine in the right place, there is absolutely no limit to what you can build.